Backfill events with S3 pull


You can use the S3 pull integration to ingest historical data from an Amazon S3 bucket into Imply Lumi. The process, often called backfill ingestion, involves manually specifying which objects to ingest. You provide an S3 bucket with optional prefix and suffix filters to limit what gets ingested. Lumi ingests events from the objects matching the specified filters.

You can ingest S3 data through the Lumi UI or the API.

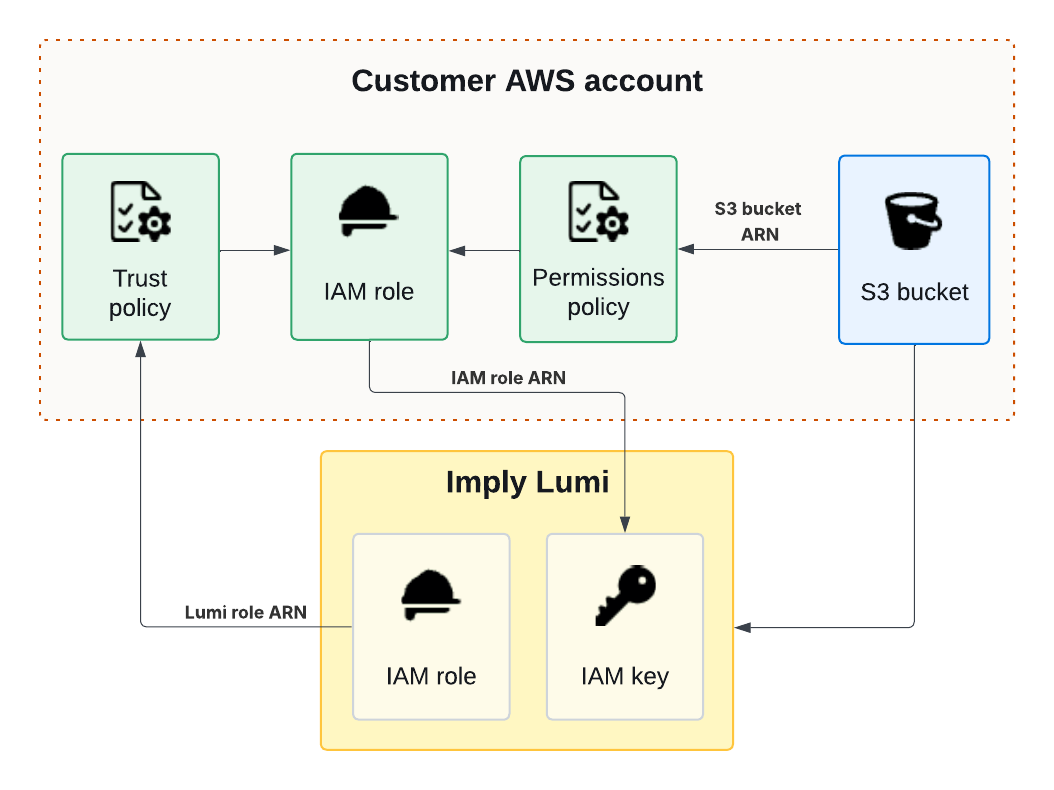

The following diagram shows how AWS services and Lumi interact to send events through backfill ingestion:

This topic provides details to configure backfill ingestion using the S3 pull integration.

Prerequisites

Before you continue, complete the steps in Send events with S3 pull to configure AWS access and create a Lumi IAM key.

Confirm that your events are in CSV, JSON, or plain text format.
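To confirm the format before you start, you can stream the first few lines of a sample object. The following is a minimal sketch, assuming the AWS CLI is installed and configured with your own AWS credentials that can read the bucket. peek_object and decompress_by_suffix are hypothetical helper names, and the helper picks a decompressor from the key suffix only (.gz or .bz2).

```shell
#!/usr/bin/env bash
# Sketch: print the first lines of an S3 object to check whether it holds CSV, JSON, or plain text.
# Assumes the AWS CLI is configured with credentials that can read the bucket.

# Decompress stdin based on the object key's suffix; other keys pass through unchanged.
decompress_by_suffix() {
  case "$1" in
    *.gz)  gzip -dc ;;
    *.bz2) bzip2 -dc ;;
    *)     cat ;;
  esac
}

# Usage: peek_object BUCKET KEY [LINES]   (hypothetical helper)
peek_object() {
  # "aws s3 cp ... -" streams the object to stdout instead of writing a local file.
  aws s3 cp "s3://$1/$2" - | decompress_by_suffix "$2" | head -n "${3:-5}"
}

# Example (placeholder bucket and key):
# peek_object example-bucket logs/example_logs.bz2
```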

Backfill job behavior

When you submit a backfill job, Lumi validates your S3 permissions, then identifies which objects to ingest based on your filters (Discovery), and finally ingests them (Processing).

Before creating backfill jobs, note the following constraints and behavior:

- Limit each job to a maximum of 1,000,000 objects. If a job matches more objects than that, Lumi doesn't proceed with ingestion. Create multiple smaller jobs, or refine your filter to reduce the size of the job. For details, see Reduce job scope.

- It can take time for a job to begin, depending on the volume of discovered objects and any backlog of existing backfill requests.

- Avoid creating multiple backfill jobs with the same specification, because this can lead to duplicate events.

- Lumi assigns the user attribute filename and the system attribute correlationId to each event from a backfill job. Events from the same job have the same correlation ID. For more information, see S3 pull attributes.
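Because Lumi doesn't ingest a job that matches more than 1,000,000 objects, it can help to estimate the count before you submit. This is a minimal sketch, assuming the AWS CLI is configured with credentials that can list the bucket. It counts every object under a literal key prefix, so for a filter with wildcards it gives an upper bound, and listing a very large prefix can itself take a while. count_objects, within_limit, and MAX_OBJECTS are hypothetical names.

```shell
#!/usr/bin/env bash
# Sketch: estimate how many objects sit under a prefix before submitting a backfill job.
# Assumes the AWS CLI is configured with credentials that can list the bucket.

MAX_OBJECTS=1000000  # Per-job object limit described in this topic

# Usage: count_objects BUCKET PREFIX   (hypothetical helper)
count_objects() {
  # "aws s3 ls --recursive" prints one line per object whose key starts with the prefix.
  aws s3 ls "s3://$1/$2" --recursive | wc -l
}

# Usage: within_limit COUNT   (exit status 0 when COUNT is at most MAX_OBJECTS)
within_limit() {
  [ "$1" -le "$MAX_OBJECTS" ]
}

# Example (placeholder bucket and prefix):
# n=$(count_objects example-bucket logs/access/2026/)
# within_limit "$n" || echo "Split this job: $n objects exceeds $MAX_OBJECTS" >&2
```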

Create a job in the UI

Create a backfill job using the Lumi UI:

-

From the Lumi navigation menu, click Integrations > S3 pull.

-

In Select the job type, click Backfill.

-

Select your IAM key.

-

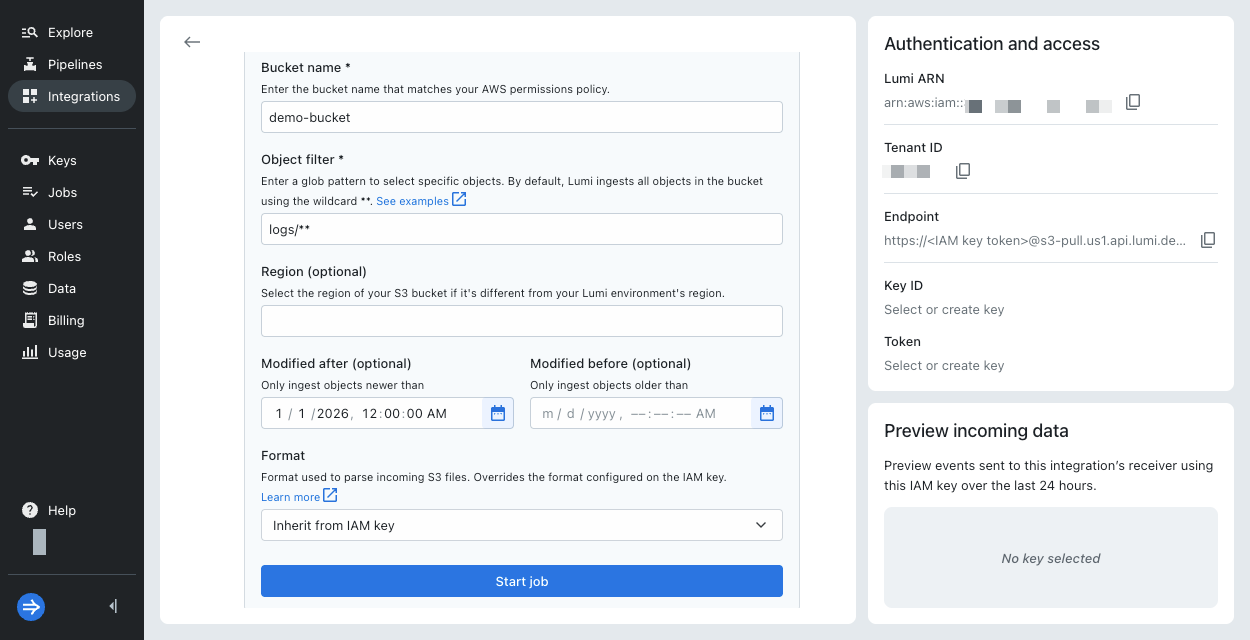

In Create a new job, enter the following details. The job requires the bucket name and object filter; all other fields are optional.

-

Bucket name: Name of the S3 bucket containing the data.

-

Object filter: Glob pattern for object keys that defines which objects to include when ingesting data. The pattern must match the entire object key. See S3 object filters for examples.

infoAs a best practice, use the most specific prefix that matches your objects. This can help speed up discovery time and avoid reaching the maximum object limit.

-

Region: AWS region of your S3 bucket. By default, it assumes the same region as the Lumi environment.

-

Modified after: Start date in ISO 8601 format. Only include objects that were created or modified after this date.

-

Modified before: End date in ISO 8601 format. Only include objects that were created or modified before this date.

-

Format: Format of the events in the objects. By default, the job inherits the event format from the IAM key. If the IAM key doesn't specify one, Lumi automatically detects the event format.

-

-

Click Start job. Note that ingestion might not begin immediately. See Backfill job behavior.

-

In Preview incoming data, view the events coming into Lumi. Lumi automatically refreshes the preview pane to display the latest events.

-

Click Explore events to see more events associated with the IAM key. Adjust the time filter to choose the range of data displayed.

Create a job by API

You can use the S3 pull API to ingest event data from an S3 bucket on demand.

To start a backfill job, send a POST request to the /ingest endpoint.

Replace S3_PULL_BACKFILL_ENDPOINT with your Lumi endpoint URL, which you can find in the Authentication and access pane of the S3 pull backfill integration in the UI.

curl --location 'S3_PULL_BACKFILL_ENDPOINT' \
  --header 'Content-Type: application/json' \
  --data '{
    "bucket": "BUCKET_NAME"
  }'

Include the S3 bucket name in the request body. All other body parameters are optional.

The request body accepts the following parameters:

- bucket (string, required): Name of the S3 bucket containing the data to backfill.
- pattern (string): Glob pattern that defines which objects to include when ingesting data. By default, Lumi ingests all objects in the S3 bucket. See S3 object filters for details.
- region (string): AWS region of your S3 bucket, for example, us-east-1. By default, Lumi assumes the same region as the Lumi environment.
- modifiedAfter (string): Start date in ISO 8601 format. Only include objects that were modified after this date and time, for example, 2025-01-01T00:00:00Z.
- modifiedBefore (string): End date in ISO 8601 format. Only include objects that were modified before this date and time, for example, 2025-01-31T23:59:59Z.
- format (string enum: csv, json, splunk_csv, splunk_hec, or plain): Format of the events in the S3 objects. By default, Lumi uses the format set on the IAM key. If the IAM key doesn't specify a format, Lumi auto-detects it.
- formatOptions (object): Options for a custom CSV format. Only applies when format is csv. Schema:

  `"formatOptions": {"type": "csv", "delimiter": <string field delimiter>, "headers": <array of header names>, "skipHeaderRows": <integer number of header rows to discard>}`

  For example:

  `"formatOptions": {"type": "csv", "delimiter": "\t", "headers": ["timestamp", "message", "host"], "skipHeaderRows": 1}`
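When you set several optional parameters, a small helper can keep the request body readable. This is a minimal sketch: build_backfill_body is a hypothetical name, the CSV options copy the tab-delimited formatOptions example in the parameter list, and values are inserted without JSON escaping, so they must not contain double quotes or backslashes. Using S3_PULL_BACKFILL_ENDPOINT as an environment variable is also an assumption.

```shell
#!/usr/bin/env bash
# Sketch: build a backfill request body with a date range and tab-delimited CSV options.
# Values are inserted as-is (no JSON escaping), so keep them free of quotes and backslashes.

# Usage: build_backfill_body BUCKET PATTERN MODIFIED_AFTER MODIFIED_BEFORE   (hypothetical helper)
build_backfill_body() {
  # The unquoted EOF lets $1..$4 expand; "\t" stays a literal JSON escape for a tab.
  cat <<EOF
{
  "bucket": "$1",
  "pattern": "$2",
  "modifiedAfter": "$3",
  "modifiedBefore": "$4",
  "format": "csv",
  "formatOptions": {
    "type": "csv",
    "delimiter": "\t",
    "headers": ["timestamp", "message", "host"],
    "skipHeaderRows": 1
  }
}
EOF
}

# Example: objects modified between 2025-01-01 and the current UTC time (ISO 8601).
# curl --location "$S3_PULL_BACKFILL_ENDPOINT" \
#   --header 'Content-Type: application/json' \
#   --data "$(build_backfill_body example-bucket 'logs/**/*.csv' \
#     2025-01-01T00:00:00Z "$(date -u +%Y-%m-%dT%H:%M:%SZ)")"
```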

Sample request

The following example ingests the logs/example_logs.bz2 object from the example-bucket S3 bucket in us-east-1, provided the object was modified after 2025-01-01T00:00:00Z:

curl --location 'https://60252ae2-c123-4d56-b78f-910112bef518@s3-pull.us1.api.lumi.imply.io/ingest' \
  --header 'Content-Type: application/json' \
  --data '{
    "bucket": "example-bucket",
    "pattern": "logs/example_logs.bz2",
    "region": "us-east-1",
    "modifiedAfter": "2025-01-01T00:00:00Z"
  }'

A successful request returns HTTP status 200 OK and the task ID (taskId) in the response body.
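To script submissions, you can check the status code and pull the task ID out of the response. This is a minimal sketch, assuming the response body is a JSON object with a top-level string field named taskId; the exact response shape isn't shown here. extract_task_id and submit_backfill are hypothetical helpers, and the sed expression is a lightweight stand-in for a real JSON parser such as jq.

```shell
#!/usr/bin/env bash
# Sketch: submit a backfill job and print its task ID.
# Assumes the response body looks like {"taskId": "..."}; the exact shape may differ.

# Read a JSON response on stdin and print the value of "taskId", if present.
extract_task_id() {
  sed -n 's/.*"taskId"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p'
}

# Usage: submit_backfill ENDPOINT JSON_BODY   (hypothetical helper)
submit_backfill() {
  local out status
  out=$(mktemp) || return 1
  # --write-out prints the HTTP status code; --output keeps the body in a temp file.
  status=$(curl --silent --location --output "$out" --write-out '%{http_code}' \
    --header 'Content-Type: application/json' --data "$2" "$1")
  if [ "$status" != "200" ]; then
    echo "Backfill request failed with HTTP status $status" >&2
    rm -f "$out"
    return 1
  fi
  extract_task_id < "$out"
  rm -f "$out"
}

# Example (endpoint URL in an environment variable is an assumption):
# task_id=$(submit_backfill "$S3_PULL_BACKFILL_ENDPOINT" '{"bucket": "example-bucket"}')
```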

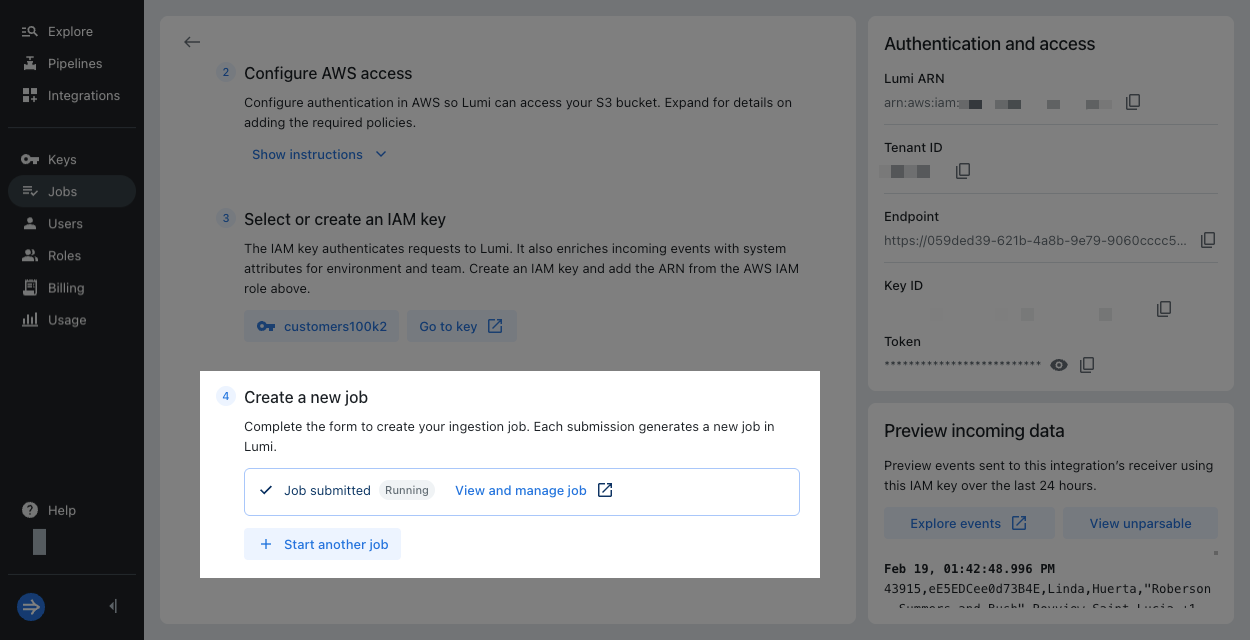

View job status

If you use the Lumi UI to submit a job, Lumi displays the status of the job. Click View and manage job for more details on the Jobs page. There, you can see the progress of discovery and processing for the job. You can also cancel the job and view past jobs. For more details, see View and manage jobs.

Check Lumi for events

You can preview the incoming data in the Lumi UI:

- From the Lumi navigation menu, click Integrations > S3 pull.

- Click Backfill and select your IAM key.

- In Preview incoming data, view the events coming into Lumi. Lumi automatically refreshes the preview pane to display the latest events. The preview pane only shows events with timestamps in the last 24 hours.

- Click Explore events to see more events associated with the IAM key. Adjust the time range selector to filter the data displayed.

Once events start flowing into Lumi, you can search them. See Search events with Lumi for details on how to search and Lumi query syntax for a list of supported operators.

If you sent events but don't see them in the preview pane, search for them in the explore view. Filter your search by the time range that spans your event timestamps. For information on troubleshooting ingestion, see Troubleshoot data ingestion.

S3 object filters

You can define filters for S3 object keys to control which objects to ingest.

In the Lumi UI, specify a pattern in the Object filter field.

In the API, include the pattern field in the request body.

The following table lists the glob patterns supported by Lumi:

| Pattern | Description | Example |
|---|---|---|
| `**` | Matches zero or more path segments | `logs/**`, `**/*.json` |
| `*` | Matches any characters except path separators | `logs/2025-10-*.json` |
| `?` | Matches exactly one character except path separators | `logs/demo-logs-?.json` |
| `[abc]` | Matches any character in the set | `logs/[aeu]*_logs.*` |
| `[a-z]` | Matches any character in the range | `logs/[a-z]*_logs.*` |
| `[!abc]` | Matches any character not in the set (negation) | `logs/[!aeu]*_logs.*` |
| `{a,b,c}` | Matches any of the alternatives (brace expansion) | `logs/*.{bz2,gz}` |
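To sanity-check a pattern before you submit a job, you can approximate it locally by recreating a few object keys as empty files and letting bash expand the glob. This is a minimal sketch, assuming bash 4 or later with globstar enabled. Bash's rules resemble the table above but aren't guaranteed to match Lumi's matcher exactly, the keys are made up, and preview is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Sketch: preview which sample keys a glob matches, using bash's own globbing (bash 4+).
# This approximates Lumi's matching; it is not Lumi's implementation.
shopt -s globstar nullglob

# Recreate a few hypothetical object keys as empty files in a scratch directory.
workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT
cd "$workdir" || exit 1
for key in logs/2025-10-01.json logs/2025-11-01.json logs/demo-logs-1.json \
  logs/demo-logs-10.json logs/app/events.json logs/archive.bz2 logs/archive.gz; do
  mkdir -p "$(dirname "$key")"
  : > "$key"
done

# Print the regular files among the already-expanded arguments; ** can also match directories.
preview() {
  local path
  for path in "$@"; do
    if [ -f "$path" ]; then
      printf '%s\n' "$path"
    fi
  done
}

# Pass patterns unquoted so that bash expands them at the call site.
preview logs/**/*.json          # all five .json keys, including logs/app/events.json
preview logs/demo-logs-?.json   # logs/demo-logs-1.json only
preview logs/*.{bz2,gz}         # logs/archive.bz2 and logs/archive.gz
```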

Reduce job scope

If your object filter matches a large number of objects in your S3 bucket, it can take a long time for Lumi to discover what to ingest. For example, with billions of objects to iterate over, a job can spend several hours in Discovery before moving on to ingestion in Processing.

To reduce the scope of discovery, make the prefix in your object filter more specific, or create multiple jobs of smaller scope.

Only a more specific prefix reduces discovery scope. Other filter patterns, such as glob wildcards, apply only after Lumi iterates over all objects under the prefix. For example, logs/**/*.json requires Lumi to evaluate the same number of objects as logs/**.
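To see why those two patterns cost the same, you can take the literal part of a pattern before its first wildcard character, since a literal prefix is all that an S3 key listing can filter on. This is a minimal sketch: literal_prefix is a hypothetical helper, and treating everything before the first *, ?, [, or { as the prefix is an assumption about how Lumi narrows discovery.

```shell
#!/usr/bin/env bash
# Sketch: derive the literal key prefix of a glob pattern (everything before the first
# *, ?, [, or {). This is an assumption about how discovery narrows its listing.

# Usage: literal_prefix PATTERN   (hypothetical helper)
literal_prefix() {
  printf '%s\n' "$1" | sed 's/[*?[{].*//'
}

literal_prefix 'logs/**/*.json'   # logs/
literal_prefix 'logs/**'          # logs/
literal_prefix 'logs/access/**'   # logs/access/
```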

Consider an example scenario of an S3 bucket organized with the following structure:

logs/
├── access/
│   └── 2026/
│       └── 01/
│           ├── 01/
│           └── 02/
├── firewall/
└── system/

Example 1: Ingest all logs in multiple smaller jobs

Instead of a single backfill job that uses the filter logs/**, you can initiate three backfill jobs with more specific prefixes:

logs/access/**

logs/firewall/**

logs/system/**
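If you submit through the API, a loop can send one request per prefix. This is a minimal sketch, assuming S3_PULL_BACKFILL_ENDPOINT is exported as an environment variable and using a placeholder bucket name. It only prints the request bodies unless DRY_RUN=0, where DRY_RUN and job_body are hypothetical names for this script. Run it once per backfill, because resubmitting the same specification can duplicate events.

```shell
#!/usr/bin/env bash
# Sketch: submit one backfill job per top-level prefix instead of a single logs/** job.
# Prints the request bodies by default; set DRY_RUN=0 to send them.

bucket=example-bucket  # placeholder
prefixes=(logs/access/ logs/firewall/ logs/system/)

# Usage: job_body BUCKET PREFIX   (the pattern becomes PREFIX followed by **)
job_body() {
  printf '{"bucket": "%s", "pattern": "%s**"}\n' "$1" "$2"
}

for prefix in "${prefixes[@]}"; do
  body=$(job_body "$bucket" "$prefix")
  if [ "${DRY_RUN:-1}" = "0" ]; then
    curl --location "$S3_PULL_BACKFILL_ENDPOINT" \
      --header 'Content-Type: application/json' \
      --data "$body"
  else
    printf '%s\n' "$body"
  fi
done
```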

Example 2: Ingest only firewall and system logs

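To ingest only firewall and system logs, list both folders as alternatives. Because the pattern must match the entire object key, end it with /** to include the objects inside those folders:

logs/{firewall,system}/**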

Example 3: Ingest access logs

To ingest only access logs, include the date structure of the object keys in the filter:

logs/access/{YYYY}/{MM}/{DD}

If you specify Modified after or Modified before, Lumi can optimize discovery when the object filter contains a standardized date pattern (YYYY, MM, DD, HH).

For example, if you limit objects to those modified after 2026-01-01 and before 2026-02-01, the date range applies on top of the object filter, so Lumi only discovers objects equivalent to logs/access/2026/01/{01..31}.

If you don't include the date pattern in the object filter, as with logs/access/**, Lumi iterates through every object under logs/access/ and then compares each one against the modified date range. This significantly increases the number of objects Lumi must evaluate during discovery.
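To make the equivalence concrete, the following sketch spells out the day-level prefixes that logs/access/2026/01/{01..31} stands for, using bash brace expansion (bash 4 or later for zero padding). It only illustrates the narrowing described above; it doesn't reproduce how Lumi plans discovery, and day_prefixes is a hypothetical name.

```shell
#!/usr/bin/env bash
# Sketch: expand the January 2026 day prefixes that the date range narrows discovery to.
# Zero-padded sequence expressions such as {01..31} require bash 4 or later.

day_prefixes=(logs/access/2026/01/{01..31}/)

printf '%s\n' "${day_prefixes[@]}"   # logs/access/2026/01/01/ through logs/access/2026/01/31/
echo "${#day_prefixes[@]} prefixes instead of every key under logs/access/"
```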

Learn more

See the following topics for more information:

- Recurring ingestion for recurring ingestion from an Amazon S3 bucket to Lumi.

- Transform events using pipelines for information on how to transform events in Lumi.

- Send events to Lumi for other options to send events.

- Cloud regions for Lumi regions and their AWS equivalents.