Connect to Azure Blob Storage

To ingest data from Azure Blob Storage into Imply Polaris, create an Azure Storage connection and use it as the source of an ingestion job. Create a unique connection for each Azure Blob Storage container from which you want to ingest data.

The Azure Blob Storage connection also supports access to Azure Data Lake Storage Gen2.

This topic provides reference information for creating a connection to Azure Blob Storage.

For an end-to-end guide to Azure Blob Storage ingestion in Polaris, see Guide for Azure Blob Storage ingestion.

Create a connection

Create an Azure Blob Storage connection as follows:

- Click Sources from the left navigation menu.

- Click Create source and select Azure Storage.

- Enter the connection information.

- Click Test connection to confirm that the connection is successful.

- Click Create connection to create the connection.

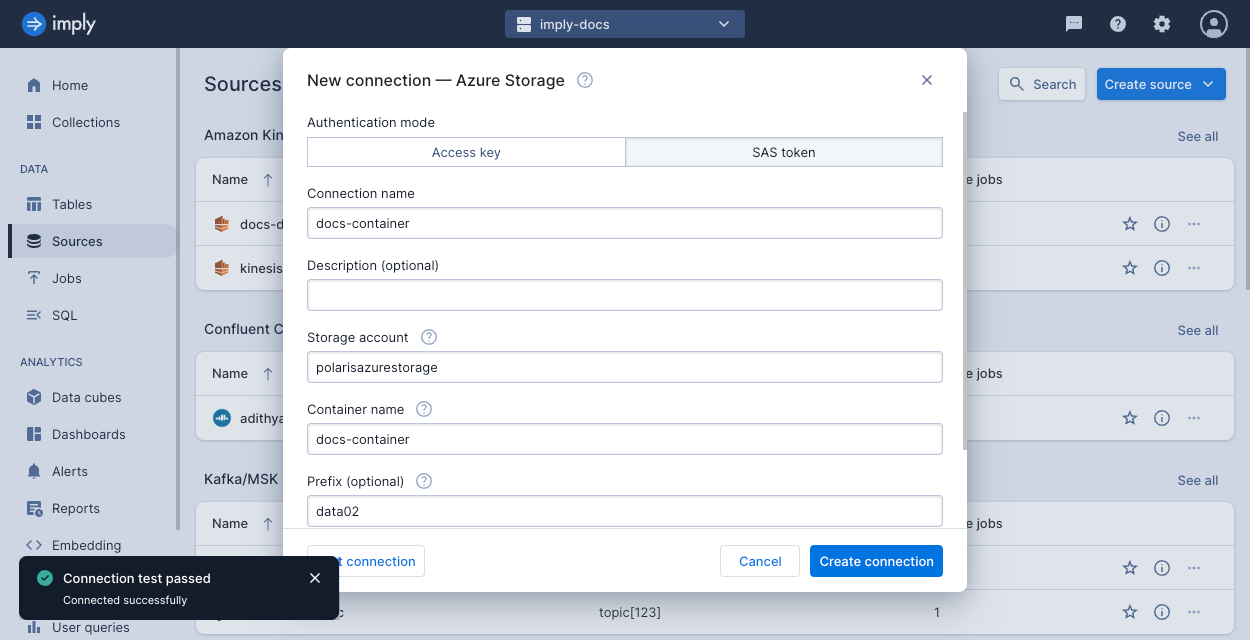

The following screenshot shows an example connection created in the UI. For more information, see Create a connection.

Connection information

Follow the steps in Create a connection to create the connection. The connection requires the following information from Azure:

Storage account name: Name of the storage account in Azure Storage. The storage account should exist before you create the connection. Ensure that the networking settings for the storage account enable public network access for all networks, or to Imply specifically (IP address 172.171.87.178). For more details, see the Azure documentation on configuring network access on a storage account.

Container name: Name of the container in the storage account. The container should exist before you create the connection.

Prefix (optional): Specify a prefix if you want to limit access to designated files in the container. The connection will be limited to the set of files matching this prefix.

For example, if the container contains the blobs `file1`, `file2`, `folder/file3`, and `folder/file4`, then a prefix of `folder` would make only `file3` and `file4` available through the connection.

Authorization to access the storage account: For Polaris to access the contents of your storage account, supply a storage account access key or a SAS token for the storage account (recommended). For examples of setting up authentication using either method, see the ingestion guide.

- To authorize using a storage account access key, ensure that your storage account enables Allow storage account key access. Supply your access key in the dialog to create the connection.

- To authorize using a shared access signature (SAS) token (recommended), generate a SAS token for the storage account, then provide the SAS token including the delimiter character `?`. The SAS token must meet the following criteria:

  - The SAS applies to the storage account level. You cannot create a valid connection when the SAS is created on the container or object level.

  - The SAS allows access to the container and object resource types, with read and list permissions.

  - The SAS is immediately usable. Note that clock skew may occur when you set the start time for a SAS to the current time. If the current time is past the SAS end time, generate a new SAS token and update the connection.
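Before entering a prefix or SAS token in the dialog, it can help to check them locally. The following Python sketch is illustrative only and is not part of Polaris. It assumes that prefixes are matched as plain string prefixes on blob names, and that the token is an Azure account SAS whose query string carries the `srt` (resource types), `sp` (permissions), `st` (start time), and `se` (expiry time) fields. The function names and the sample token are hypothetical.

```python
from datetime import datetime, timezone
from urllib.parse import parse_qs


def visible_blobs(blob_names, prefix):
    """Return blob names that start with the prefix (assumed plain string match)."""
    return [name for name in blob_names if name.startswith(prefix)]


def _parse_sas_time(value):
    # SAS times are ISO 8601 UTC strings, for example 2024-01-01T00:00:00Z.
    parsed = datetime.fromisoformat(value.replace("Z", "+00:00"))
    if parsed.tzinfo is None:
        # Date-only values carry no offset; treat them as UTC.
        parsed = parsed.replace(tzinfo=timezone.utc)
    return parsed


def check_account_sas(token, now=None):
    """Return a list of problems found in the token; an empty list means none found."""
    now = now or datetime.now(timezone.utc)
    problems = []

    if not token.startswith("?"):
        problems.append("token should include the leading '?' delimiter")

    params = parse_qs(token.lstrip("?"))

    def field(key):
        return params.get(key, [""])[0]

    # Account-level SAS tokens carry 'srt'; its absence suggests a container
    # or object level SAS, which the connection does not accept.
    if "srt" not in params:
        problems.append("no 'srt' field: token does not look like an account-level SAS")
    elif not {"c", "o"} <= set(field("srt")):
        problems.append("'srt' must include container (c) and object (o)")

    if not {"r", "l"} <= set(field("sp")):
        problems.append("'sp' must include read (r) and list (l)")

    if field("st") and _parse_sas_time(field("st")) > now:
        problems.append("start time is in the future: token is not usable yet")

    if field("se") and _parse_sas_time(field("se")) <= now:
        problems.append("token has expired: generate a new SAS token")

    return problems


# Fabricated sample values for illustration; the signature is not real.
sample_token = (
    "?sv=2022-11-02&ss=b&srt=co&sp=rl"
    "&st=2024-01-01T00:00:00Z&se=2024-12-31T00:00:00Z&sig=FAKE"
)
sample_now = datetime(2024, 6, 1, tzinfo=timezone.utc)

print(visible_blobs(["file1", "file2", "folder/file3", "folder/file4"], "folder"))
print(check_account_sas(sample_token, now=sample_now))
```

The check only inspects the fields of the token. It does not verify the signature or contact Azure, so use Test connection in the UI to confirm that the connection actually works.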

Learn more

See the following topics for more information:

- For an end-to-end guide to Azure Blob Storage ingestion in Polaris, see Guide for Azure Blob Storage ingestion.

- To learn how to ingest data from Azure Blob Storage using the Polaris API, see Ingest data from Azure Blob Storage by API.

- For details on how to ingest metadata pertaining to the Azure blobs, see Ingest object metadata.